You know that a technology has hit the mainstream when it appears in PCWorld. Such is the case for cloud computing, a topic PCWorld considers in its recent piece Amazon Web Services Sees Infrastructure as Commodity. Despite the rather banal title, this article makes some interesting points about the nature of commoditization and the effect this will have on the pricing of services in the cloud. It’s a good article, but I would argue that it misses an important point about the evolution of cloud services.

Of the three common models of cloud (SaaS, PaaS, and IaaS), it’s the last, Infrastructure-as-a-Service (IaaS), that most captivates me. I can’t help but begin planning my next great startup built using the virtualization infrastructure of EC2, Terremark, or Rackspace. But despite a deep personal interest in the technology underpinning the service, I think what really captures my imagination with IaaS is that it removes a long-standing barrier to application deployment. If my killer app is done, but my production network and servers aren’t ready, I simply can’t deploy. All of the momentum that I’ve built up during the powerful acceleration phase of a startup’s early days is sucked away, kind of like a sports car driving into a lake. For too many years, deployment has been that icy cold water that saps the energy out of agile when the application is nearing GA.

IaaS drains the lake. It makes production-ready servers instantly available so that teams can deploy faster, and more often. This is the real stuff of revolution. It’s not the cool technology; it’s the radical change to the software development life cycle that makes cloud seem to click.

The irony, however, is that Infrastructure-as-a-Service is itself becoming much easier to deploy. It turns out that building data centers is something people are pretty good at. Bear in mind, though, that a data center is not a cloud: it takes some very sophisticated software layered over commodity hardware to deploy and manage virtualization effectively. But this management layer is rapidly becoming as simple to deploy as the hardware underlying it. Efforts such as Eucalyptus, along with commercial offerings from vendors like VMware, Citrix, 3Tera (now CA), and others, are removing the barriers that have until recently stood in the way of the general-purpose co-location facility becoming an IaaS provider.

Lowering this barrier to entry will have a profound effect on the IaaS market. Business is compelled to change whenever products, processes, or services edge toward commodity. IaaS, itself a textbook example of a product made possible by the process of commoditization, is set to become simply another commodity service, operating in a world of downward price pressure, ruthless competition, and razor-thin margins. Amazon may have had this space to itself for several years, but the simple virtualization-by-the-hour marketplace is set to change forever.

The PCWorld article misses this point. It maintains that Amazon will always dominate the IaaS marketplace by virtue of its early entry and the application of scale. On this last point I disagree, and suggest that the real story of IaaS is one of competition and a future of continued change.

In tomorrow’s cloud, the money will be made by those offering higher-value services, which is a story as old as commerce itself. Amazon, to its credit, has always recognized that this is the case. The company is a market leader not just because of its IaaS EC2 service, but because the scope of its offering includes such higher-level services as database (SimpleDB, RDS), queuing (SQS) and Content Delivery Networks (CloudFront). These services, like basic virtualization, are the building blocks of highly scalable cloud applications. They also drive communications, which is the other axis where scale matters and money can be made.

The key to the economic equation is to possess a deep understanding of just who-is-doing-what-and-when. Armed with this knowledge, a provider can bill. Some services—virtualization being an excellent example—lend themselves to simple instrumentation and measurement. However, many other services are more difficult to sample effectively. The best approach here is to bill by the transaction, and measure this by acquiring a deep understanding of all of the traffic going in or out of the service in real time. By decoupling measurement from the service, you gain the benefit of avoiding difficult and repetitive instrumentation of individual services and can increase agility through reuse.
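To make the idea of decoupled measurement a little more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions, not how any particular product works: the `Meter` class, the two stand-in services, and the per-transaction prices are all hypothetical. The point it tries to show is that the services contain no billing code at all; the counting happens in a layer wrapped around them, so the same meter can be reused for every new service.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical per-transaction prices in cents, keyed by service name.
PRICE_CENTS = {"queue": 1, "database": 3}

Handler = Callable[[str, dict], dict]


@dataclass
class Meter:
    """Counts transactions per (customer, service), outside the services themselves."""
    counts: Counter = field(default_factory=Counter)

    def wrap(self, service: str, handler: Handler) -> Handler:
        # Return a handler that records each call, then passes it through unchanged.
        def metered(customer: str, request: dict) -> dict:
            self.counts[(customer, service)] += 1
            return handler(customer, request)
        return metered

    def invoice(self, customer: str) -> int:
        # Total owed by one customer, in cents, across all metered services.
        return sum(
            n * PRICE_CENTS[svc]
            for (cust, svc), n in self.counts.items()
            if cust == customer
        )


# Two stand-in services with no instrumentation of their own (illustrative only).
def queue_service(customer: str, request: dict) -> dict:
    return {"status": "enqueued", "body": request.get("body")}


def database_service(customer: str, request: dict) -> dict:
    return {"status": "stored", "key": request.get("key")}


meter = Meter()
send = meter.wrap("queue", queue_service)
store = meter.wrap("database", database_service)

send("acme", {"body": "hello"})
send("acme", {"body": "world"})
store("acme", {"key": "k1"})
store("initech", {"key": "k2"})

print(meter.invoice("acme"))     # 2 queue calls + 1 database call
print(meter.invoice("initech"))  # 1 database call
```

A real management layer would of course sit on the network path rather than wrap in-process functions, and would have to cope with the throughput and security variety discussed next, but the shape of the design is the same: measure at the boundary, not inside each service.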

Measuring transactions in real time demands a lot from the API management layer. In addition to needing to scale to many thousands of transactions per second, this layer needs to provide sufficient flexibility to accommodate the tremendous diversity in APIs. One of the things that we’ve noticed here at Layer 7 with our cloud provider customers is that most of the APIs they are publishing use fairly simple REST-style interfaces with equally basic security requirements. I can’t help but feel a nagging sense of déjà vu. Cloud APIs today are still in the classic green field stage of any young technology. But we all know that things never stay so simple for long. Green fields always grow toward integration, and that’s when the field becomes muddy indeed. Once APIs trend toward complexity, when it is not just usernames and passwords you have to worry about but also SAML, OAuth variations, Kerberos and certificates, that’s when the API management layer can either work for you or against you, and this is one area where experience counts for a lot. Rolling out new and innovative value-added services is set to become the basis of every cloud provider’s competitive edge, so agility, breadth of coverage and maturity are essential requirements in the management layer.

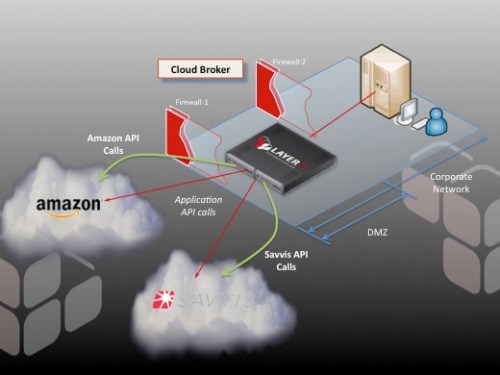

So to answer my original question, if you are a cloud provider, you will make money on the higher-level services. But where does that leave the rest of us? There is certainly money to be made around these services themselves, by building them, or providing the means to manage them. The cloud software vendors will make money by providing the crucial access control, monitoring, billing, and management tools that cloud providers need to run their business. That happens to be my business, and this infrastructure is exactly what Layer 7 Technologies is offering with its new CloudControl product, a part of the CloudSpan family of products. CloudControl is a provider-scale solution for managing the APIs and services that are destined to become the significant revenue stream for cloud providers—regardless of whether they are building public or private clouds.

You can learn more about CloudControl on Layer 7’s web site.