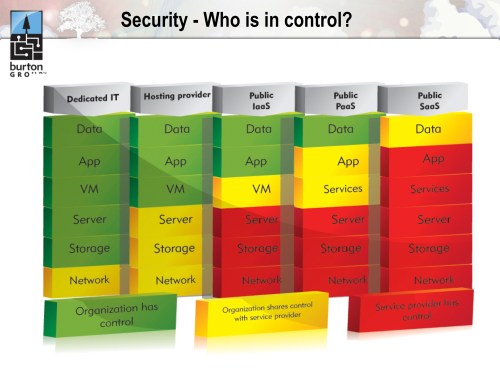

Two weeks ago, I delivered a webinar about new security models in the cloud with Anne Thomas Manes from Burton Group. Anne had one slide in particular, borrowed from her colleague Dan Blum, which I liked so much I actually re-structured my own material around it. Let me share it with you:

This graphic does the finest job I have seen of clearly articulating where the boundaries of control lie under the different models of cloud computing. Cloud, after all, is really about surrendering control: we delegate management of infrastructure, applications, and data to realize the benefits of commoditization. But successful transfer of control implies trust–and trust isn’t something we bestow easily onto external providers. We will only build this trust if we change our approach to managing cloud security.

Cloud’s biggest problem isn’t security; it’s the continuous noise around security that distracts us from the real issues and the possible solutions. It’s not hard to create a jumbled list of things to worry about in the cloud. It is considerably harder to come up with a cohesive model that highlights a fundamental truth and offers a new perspective from which to consider solutions. This is the value of Dan’s stack.

The issues in the cloud that scare us the most all fall predictably out of the change in control this environment demands. Enterprise IT has carefully constructed an edifice of trust based on its existing on-premise security models. Cloud challenges these models. Cloud rips pieces from the foundation of this trust, leaving a structure that feels unstable and untrustworthy.

We cannot simply maintain existing security models in the cloud; instead, we need to embrace a new approach to security that understands the give-and-take of control that is inherent to the cloud. This demands we recognize where we are willing to surrender control, acknowledge that this conflicts with our traditional model, and change our approach to assert control elsewhere. Over time we will gain confidence in the new boundaries, in our new scope of control, and in our providers–and out of this will emerge a new formal model of trust.

Let’s consider Infrastructure-as-a-Service (IaaS) as a concrete example. Physical security is gone; low-level network control is gone; firewall control is highly abstracted. If your security model–and the trust that derives from this–is dependent on controlling these elements, then you had better stay home or build a private cloud. The public cloud providers recognize this and will attempt to overlay solutions that resemble traditional security infrastructure; however, it is important to recognize that behind this façade, the control boundaries remain and the same stack elements fall under their jurisdiction. Trust can’t be invested in ornament.

If you are open to building a new basis for trust, then the public cloud may be a real option. “Secure services, not networks” must become your guiding philosophy. Build your services with the resiliency you would normally reserve for a DMZ-resident application. Harden your OS images with a similar mindset. Secure all transmissions in or out of your services by re-asserting control at the application protocol level. This approach to secure loosely coupled services was proven in SOA, and it is feasible and pragmatic in an IaaS virtualized environment. It is, however, a model for trust that departs from traditional network-oriented security thinking, and this is where the real challenge resides.
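To make "re-asserting control at the application protocol level" a little more concrete, here is a minimal sketch in Python of message-level authentication between two services. It is only an illustration under stated assumptions, not a description of any particular product: the names `SHARED_KEY`, `MAX_AGE_SECONDS`, `sign_message`, and `verify_message` are hypothetical, and the key is hard-coded purely for the example. The idea is that the receiving service checks an HMAC tag and a timestamp on every message, so it does not have to trust whatever network the message crossed.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical pre-shared key; a real service would load this from a key store.
SHARED_KEY = b"example-pre-shared-key"
# Hypothetical freshness window used to reject replayed or stale messages.
MAX_AGE_SECONDS = 300


def sign_message(payload: dict, key: bytes = SHARED_KEY,
                 now: Optional[float] = None) -> dict:
    """Wrap a payload in an envelope carrying a timestamp and an HMAC-SHA256 tag."""
    timestamp = int(time.time() if now is None else now)
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, f"{timestamp}.{body}".encode(), hashlib.sha256).hexdigest()
    return {"timestamp": timestamp, "body": body, "signature": tag}


def verify_message(envelope: dict, key: bytes = SHARED_KEY,
                   now: Optional[float] = None) -> dict:
    """Return the payload if the tag is valid and fresh; raise ValueError otherwise."""
    current = time.time() if now is None else now
    signed = f"{envelope['timestamp']}.{envelope['body']}".encode()
    expected = hmac.new(key, signed, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing.
    if not hmac.compare_digest(expected, envelope["signature"]):
        raise ValueError("signature mismatch")
    if abs(current - envelope["timestamp"]) > MAX_AGE_SECONDS:
        raise ValueError("message outside freshness window")
    return json.loads(envelope["body"])


if __name__ == "__main__":
    envelope = sign_message({"order": 42})
    print(verify_message(envelope))
```

Note that this sketch covers integrity and sender authentication only. It does not encrypt anything, so confidentiality would still need TLS or message-level encryption on top.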

Scott,

sorry for the length of the comment below, I am just thinking aloud 🙂

“Secure services, not networks” is a big paradigm shift. This shift is not so exciting for the customer. It basically means that you cannot TRUST network security any more, and now the full burden of security falls on the service layer.

Up to now, when we use a service-level security layer (let’s call all these devices SOA brokers), in a nutshell, we add Auth&Auth, XML threat protection, schema validation … on top of the network security. We still take network security for granted and improve on it with a new layer of abstraction.

Now you are telling me that in the cloud, my network security net is so full of holes that I have to give up any trust, survive with bad guys in my backyard and trust only myself, putting a security broker in front of all my apps, with all the burden of configuring them. Not so exciting!

I see some inherent contradictions between the interests of the various parties: customer, cloud provider and SOA broker provider (typ. L7).

The cloud provider has to convince the customer that a VPC is just as secure as the customer’s internal network. He/she can use a combination of layer 2-4 technologies, auditing, whatever … to push the point. OK, currently the industry is not there yet, but is it really technically impossible to establish trust at this level?

The SOA broker has a vested interest in undermining the customer’s confidence in VPC security, to be able to sell more licences and services; this is not “bad”, it is just that e.g. L7 is naturally in such a position: it is more interesting to sell many SOA proxies than a central SOA broker, cross-cutting internal and external clouds.

The customer is thus caught between 2 fires: who shall I trust, my cloud provider VPC, or my own teams administering a network of SOA proxies?

I have no definitive answer to this dilemma.

A somewhat valid analogy is to compare a safe in your home, a safe in a hotel room, and a safe at the bank.

Which is the safest place to put your jewels?

Probably bank, then home, then hotel room.

So the challenge for cloud providers is to prove that their VPCs are more like a bank safe than a hotel safe. Trust is a complex matter where technology plays only a part.

The original blog post that my diagram appeared in is http://srmsblog.burtongroup.com/2009/06/cloud-computing-who-is-in-control.html. I’m working on another one right now!

That’s good news Dan. I’m anxious to see the new version.

Regards,

Scott

The Public and Private domains will always exist. The Trusted and Untrusted just became more obvious; the physical walls of your office or colo don’t make a difference. They were always a risk regardless.

Back up a bit. I host “public services”. I logically classify data first as public/private, then I specify public domain with host authentication, then the user sets up an account and we provide them with a pre-shared key. Phewww! Isn’t that secure enough? As long as we don’t just blindly trust the neighbour’s cloud and continue the same paranoid security logic we should be ok.

May I suggest we continue to allocate to that service only the data we intend the recipients to obtain. If we change the criteria for the shared data, we should re-evaluate the legitimacy of the existing users.

I have to admit in the web application server days, I provisioned data sources with full SQL rights because I was lazy and blindly trusted the sandbox security.

Nothing else has changed, except that we actually consider domain-based security instead of only specific host or user security. Maybe we should dictate a sub-group security level, where semi-public data requires a subgroup security allocation.

Maybe there is something to say for NSA’s MAC-based SELinux! To me it’s too granular. Stick with the public domain sub-grouping method! Read a pgsql-hackers take on it: http://tinyurl.com/yf8akby

I think you make a good point in that much of our existing security, in which we invest so much trust, often isn’t that trustworthy at all. Cloud forces us to confront our previous assumptions, and sometimes then we’re able to see them for what they really are. This is one of the things that I like about the whole cloud phenomenon: it forces us to think about issues like security, trust, control, latency, etc. that we were able to ignore–either rightly or wrongly–when building conventional on-premise apps.

Identity is one of those issues. How identity is communicated; how it’s bound to transactions; how it’s validated–all of these need careful consideration and potentially different approaches in the cloud, where localized directories or IAM systems may not be present, and hops between hosts carry risk. Look for a detailed post on this soon, because it’s one of the more interesting challenges in the cloud.

I like SELinux. It’s well thought out, but from our own experience, it’s not for everyone. You really need to design your applications around it to be effective. It’s great for a certain type of solution, but it’s definitely not for the mainstream.