Here at Layer 7 we get asked a lot about our support for REST. We actually have a lot to offer to secure, monitor and manage REST-style transactions. The truth is, although we really like SOAP and XML here at Layer 7, we also really like REST and alternative data encapsulations like JSON. We use both REST and JSON all the time in our own development.

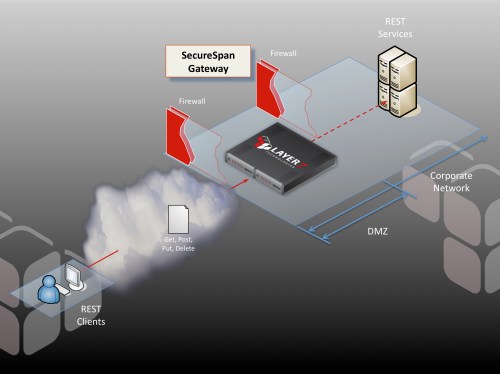

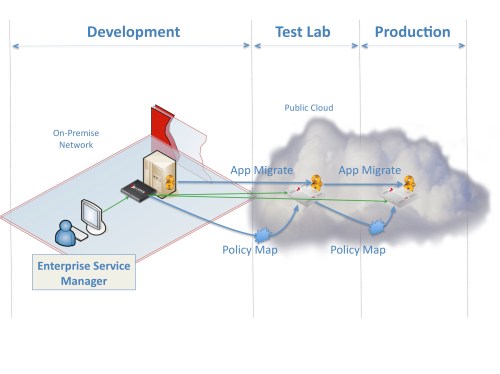

Suppose you have a REST-based service that you would like to publish to the world, but you are concerned about access control, confidentiality, integrity, and the risk from incoming threats. We have an answer for this: SecureSpan Gateway clusters, deployed in the DMZ, give you the ability to implement run time governance across all of your services:

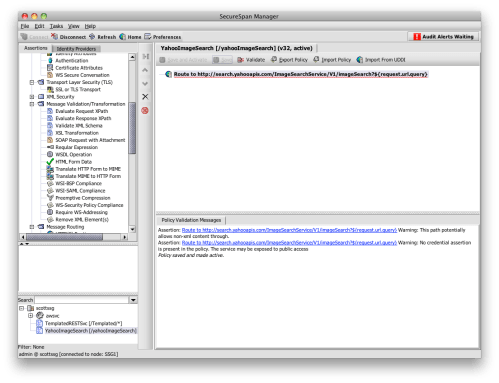

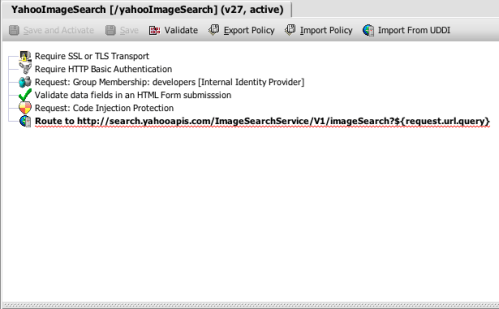

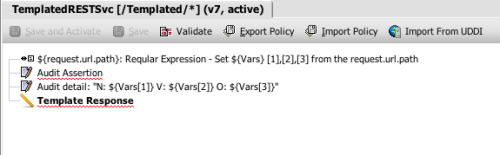

Pictures are nice, but this scenario is best understood using a concrete example. For the services, Yahoo’s REST-based search API offers us everything we need–it even returns results in JSON format, instead of XML. Yahoo has a great tutorial describing how to use this. The tutorial is a little dated, but it’s simple, to the point, and the REST service is still available. Let’s imagine that I’m deploying a SecureSpan Gateway in front of the servers hosting this API, as I’ve illustrated above. The first thing I will do is create a very simple policy that just implements a reverse proxy. No security yet–just a level of indirection (click on the picture for detail):

This is just about as simple as a policy can get. Notice that the policy validator is warning me about a few potential issues. It’s pointing out that the transaction will pass arbitrary content, not just XML. Because I’m expecting JSON formatted data in the response, this is the behavior I expect. The validation is also warning me that this policy has no authentication at all, leaving the service open to general, anonymous access. We’ll address this in the next step.

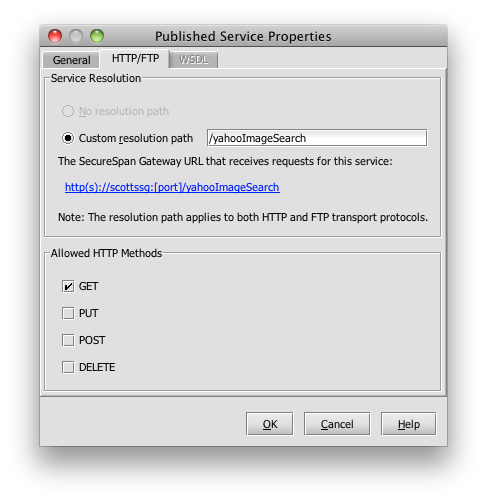

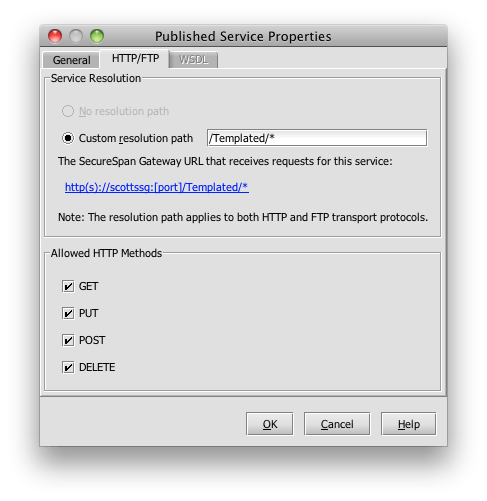

I’ve explicitly attached this policy to the gateway URL:

http://scottssg/yahooImageSearch

If need be, I could easily add wild card characters here to cover a range of incoming URLs. For this demonstration, I’m just running a virtual SecureSpan Gateway here on my Macbook; it’s not actually residing in the Yahoo DMZ, as would be the case in a real deployment. But from the perspective of an administrator building policy, the process is exactly the same regardless of where the gateway lives.

I’ve also placed a restriction on the listener to only accept HTTP GET verbs:

Now I can point my web browser to the gateway URL shown above, and get back a JSON formatted response proxied from Yahoo. I’ll use the same example in the Yahoo tutorial, which lists pictures of Madonna indexed by Yahoo:

http://scottssg:8080/yahooImageSearch?appid=YahooDemo&query=Madonna&output=json

This returns a list looking something like this:

{"ResultSet":{"totalResultsAvailable":"1630990", "totalResultsReturned":10, "firstResultPosition":1, "Result":[{"Title":"madonna jpg", ...

which I’ve truncated a lot because the actual list spans thousands of characters. The Yahoo tutorial must be fairly old; when it was written, there were only 631,000 pictures of the Material Girl. Clearly, her popularity continues unabated.

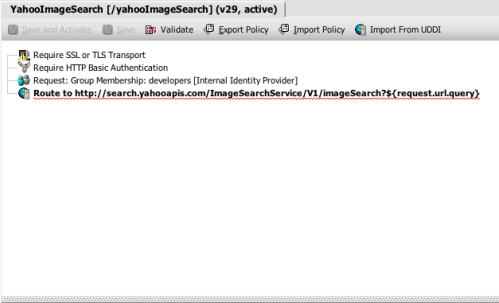

Now let’s add some security. I’d prefer that nobody on the Internet learns that I’m searching for pictures of Madge, so we need to implement some privacy across the transaction. I can drag-and-drop an SSL/TLS assertion into the top of my policy to ensure that the gateway will only accept SSL connections for this RESTful service. Next, I’ll put in an assertion that checks for credentials using HTTP basic authentication. I’ll use the internal identity provider to validate the username/password combination. The internal identity provider is basically a directory hosted on the SecureSpan Gateway. I could just as easily connect to an external LDAP, or just about any commercial or open source IAM system. As for authentication, I will restrict use of the yahooImageSearch REST service to members of the development group:

HTTP basic authentication isn’t very sophisticated, so we could easily swap this out and implement pretty much anything else, including certificate authentication, Kerberos, SAML, or whatever satisfies our security requirements. My colleague here at Layer 7, Francois Lascelles, recently wrote an excellent blog post exploring some of the issues associated with REST authentication schemes.

Let’s review what we this simple policy has given us:

- Confidentiality, integrity, and server (gateway) authentication

- Authentication

- Authorization

- Virtualization of the internal service, and publication to authorized users

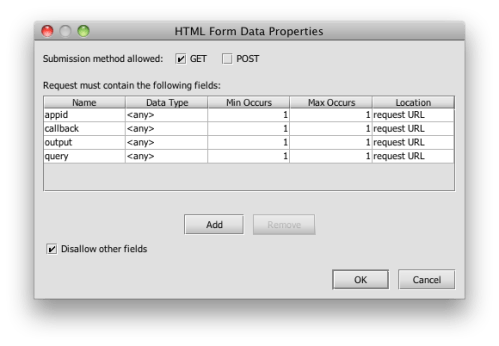

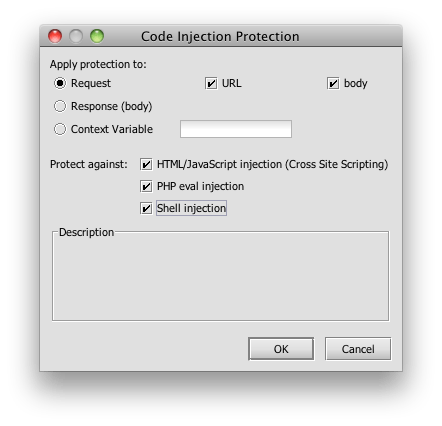

This is good, but I’d like to add some more REST-specific constraints, and to filter out potential REST attacks that may be launched against my service. I can do this with two simple assertions: one that validates form field in HTML, and another that scans the content for various code injection signatures:

The form data assertion allows me to impose a series of tight constraints on the content of query parameters. In effect, it let’s me put a structural schema on an HTTP query string (or POST parameters). I’m going to be very strict here, and explicitly name every parameter I will accept, to the exclusion of all others. For the Yahoo search API, this includes:

-

appid

-

query

-

output

-

callback

The latter does some wrapping of the return request to facilitate processing in JavaScript within a browser:

Depending on my security requirements, I could also be rigorous with parameter values using regular expressions as a filter. I’ll leave that as an exercise for the reader.

Depending on my security requirements, I could also be rigorous with parameter values using regular expressions as a filter. I’ll leave that as an exercise for the reader.

Naturally, I’m concerned about REST-born threats, so I will configure the code injection assertion to scan for all the usual suspects. This can be tuned so that it’s not doing unnecessary work that might affect performance in a very high volume situation:

That’s it–we’re done. A simple 6 assertion policy that handles confidentiality, integrity, authentication, authorization, schema validation, threat detection, and visualization of RESTful JSON services. To call this, I’ll again borrow directly from the Yahoo tutorial, using their HTML file and simply change to URL to point to my gateway instead of directly to Yahoo:

That’s it–we’re done. A simple 6 assertion policy that handles confidentiality, integrity, authentication, authorization, schema validation, threat detection, and visualization of RESTful JSON services. To call this, I’ll again borrow directly from the Yahoo tutorial, using their HTML file and simply change to URL to point to my gateway instead of directly to Yahoo:

<html>

<head>

<title>How Many Pictures Of Madonna Do We Have?</title>

</head>

</body>

<script type="text/javascript">

function ws_results(obj) {

alert(obj.ResultSet.totalResultsAvailable);

}

</script>

<script type="text/javascript" src="https://scottssg:8443/yahooImageSearch?appid=YahooDemo&query=Madonna&output=json&callback=ws_results"></script>

<body></body>

</html>

Still can’t get over how many pictures of Madonna there are.

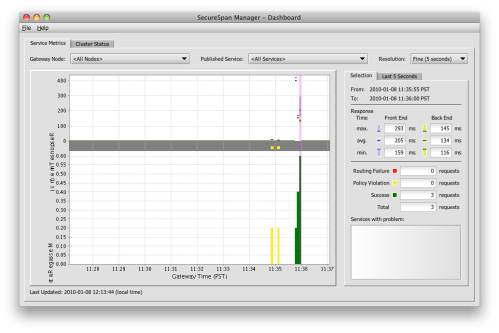

I ran it a few times and here’s what it looks like in the dashboard. I threw in some policy failures to liven up the display:

So where can we go from here? Well, I would think about optimization of the policy. Depending on predicted loads and available hardware, we might want to check for code injection and validate the schema before performing authentication, which in the real world would likely call out to an LDAP directory. After all, if we are being fed garbage, there’s no sense in propagating this load to the directory. We can add SLA constraints across the service to insulate back end hosts from traffic bursts. We could also provide basic load distribution across a farm of multiple service hosts. We might aggregate data from several back-end services using lightweight orchestration, effectively creating new meta-services from existing components. SecureSpan Gateways provide over 100 assertions that can do just about anything want to an HTTP transaction, regardless of whether it contains XML or JSON data. You can also develop custom assertions which plug into the system and implement new functionality that might be unique to your situation. Remember: when you are an intermediate, standing in the middle between a client and a service–as is the case with any SecureSpan Gateway–you have complete control over the transaction, and ultimately the use of the service itself. This has implications that go far beyond simple security, access control, and monitoring.